So where's the science..?

Message boards :

SETI@home Staff Blog :


| Author | Message |

|---|---|

Matt Lebofsky Joined: 1 Mar 99 Posts: 1444 Credit: 957,058 RAC: 0

|

As time goes on, more SETI@home participants are frustrated with the perceived lack of scientific progress. So what's the deal?
Keep in mind the essence of the science is actually really simple. We get lots of data. The function of the SETI@home clients is to reduce this data. They convert a sizeable chunk of frequency/time data into a few signals. These signals alone aren't all that interesting. They only become interesting when similar signals (similar in frequency, shape, and sky position) are found in observations that happen at different times. The process of "matching" these similar signals is the crux of the problem SETI attempts to solve.
During the first five years of SETI@home (the "Classic" years) we never really had a useable science database. Signals were gushing into our servers which, at the time, could barely handle the load of simply inputting them into the database, much less validating the actual science before doing so. Technology improved and we have somewhat better servers (and the more streamlined BOINC server backend) so that we can validate the science and input incoming signals in "real time" (i.e. as fast as they come in). Nevertheless, the result is we still have an unwieldy science database with almost a billion signals in it, and we're adding two million more every day.
But we had to get to this point where we are today, which meant creating the first "master science" database of validated signals from Classic via a long and painful process of "redundancy checking." Then we had to merge the Classic and BOINC science databases into one. We then had to migrate the data onto a bigger, better server. We still have yet to do the big "database correction" where we clean up the data for final analysis. Each of these projects took (or will take) at least several calendar months. Why? Well, there are lots of important sub-projects and "getting your ducks in a row."
Plus a lot of care and planning goes into moving this data around, which vastly slows down progress - for example, each big step along the way requires backing up all the data, which takes an uninterrupted calendar day or two. We don't want to be too cavalier with our master science database, you know? Plus we don't do anything too crazy towards the end of the week so we don't have to deal with chaos over the weekend. So often we're waiting until Monday or Tuesday to do the next small step.
Now factor in the lack-of-manpower issue. I disappear for months at a time to go play rock star (SETI@home is my day job - in return for being overworked/underpaid while I'm here, I get incredible flexibility to do whatever I want whenever I want). Jeff goes backpacking in the Sierras. Eric has a zillion other projects demanding his time, as does Dan. Frequently somebody is sick or dealing with personal issues and out of the office. When we're a "man down" every "big" project comes to a standstill, as we barely have enough people to maintain the day-to-day projects. And when an unpredictable server crisis hits the fan all bets are off.
Nevertheless, we did manage to squeeze out one set of candidates back in 2003 for reobservation. Basically, we were given a window of opportunity to observe at Arecibo positions of our choosing, and so we all dropped everything and threw all our effort into scouring our database for anything interesting to check out. The database was much smaller then. We also still had some funds from the glorious dot-com donation era, so our staff was a bit larger (we had scientific programmer Steve Fulton and web programmer Eric Person working full time, as well as various extra students). While the science behind this candidate run was sound, we didn't have much time to "do things right" so the code generated to scour our database and select candidates was basically a one-shot deal.
The code did the job then, but is basically useless now - something we were well aware of at the time but, due to time constraints, couldn't do anything about.
So where are we now and what's next? Only relatively recently do we have our science database on a server up to the task of doing something other than inserting more signals. As mentioned above we need to do a big "database correction" - I'm sure more will be written up about this in due time. Then we need to develop the candidate hunter, a.k.a. "persistency checker," which runs in real time. This latter project recently got an advance kick in the butt thanks to a new part-time programmer (Daniel) working on skymaps for our website - this shares a lot of the code with the persistency checker. We also have the new multibeam receiver on line and collecting lots of data. Tens of terabytes so far, in fact, which will hopefully be distributed to y'all in the coming weeks/months.
I'll finish up by stating that the actual science, while moving at glacial speeds, is not going stale. Remember these signals are potentially coming from light years away - so a few years' delay isn't going to hurt the science. It will hurt user interest, though, which is always a concern and a source of frustration for us - that's a topic for a later time. - Matt -- BOINC/SETI@home network/web/science/development person -- "Any idiot can have a good idea. What is hard is to do it." - Jeanne-Claude |
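For readers who want to picture the "matching" step described in the post, here is a minimal sketch of a persistency check. It is not the project's code: the `Signal` fields, the tolerances, and the `find_persistent` helper are all hypothetical, and signal shape is left out entirely. It only illustrates the idea of keeping signals that recur at a similar frequency and sky position on different days.

```python
from collections import defaultdict
from typing import NamedTuple


class Signal(NamedTuple):
    """Hypothetical record for one reduced signal (not the real schema)."""
    ra_deg: float    # sky position: right ascension, degrees
    dec_deg: float   # sky position: declination, degrees
    freq_hz: float   # detected frequency
    day: int         # observation date as a simple day count


def find_persistent(signals, pos_tol_deg=0.1, freq_tol_hz=10.0, min_days=2):
    """Bin signals into coarse (position, frequency) cells and return the
    cells that were hit on at least `min_days` distinct days.

    Assumption: fixed-size cells. Two similar signals that straddle a cell
    boundary are missed; a real checker would also compare neighbouring cells.
    """
    cells = defaultdict(list)
    for s in signals:
        key = (round(s.ra_deg / pos_tol_deg),
               round(s.dec_deg / pos_tol_deg),
               round(s.freq_hz / freq_tol_hz))
        cells[key].append(s)
    return [group for group in cells.values()
            if len({s.day for s in group}) >= min_days]


signals = [
    Signal(10.01, 20.02, 1_420_000_000.0, day=1),
    Signal(10.02, 20.01, 1_420_000_002.0, day=400),  # same spot, a year later
    Signal(50.00, -5.00, 1_419_000_000.0, day=3),    # seen only once
]
candidates = find_persistent(signals)
print(len(candidates), "candidate group(s)")
```

Only the first two signals end up grouped, because they share a cell and were seen on different days. Matching against the whole database is the hard part the post describes; this sketch assumes everything fits in memory.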

Searcher Joined: 26 Jun 99 Posts: 139 Credit: 9,063,168 RAC: 15

|

Nice post. Should there be a seti2 client whose purpose is to analyze the science database, much the way we look at the raw data, looking for important signals?

|

|

Olivier ROGER-SOULILLOU Joined: 28 May 06 Posts: 37 Credit: 501,017 RAC: 0

|

Reading all that, and seeing that SETI was not really perfect at the beginning in the way it handled its data, and that it now needs to build one main database, check it for redundancies, clean it, and do reobservations... it's obvious that you need IBM's Blue Gene, NASA's Columbia, or the Los Alamos supercomputers rather than even all our computers in the world. And why not? Some are available... Anyway, while it's true that I noticed people were rather frustrated about years of delay and crunching for this kind of research, patience is THE word... Perhaps it would be better understood if more were explained, about results for instance, showing some even if they are not certain... Best regards from France. Dr. O ROGER-SOULILLOU |

Matt Lebofsky Joined: 1 Mar 99 Posts: 1444 Credit: 957,058 RAC: 0

|

"Should there be a seti2 client thats purpose it to analyze the science database much the way we look at the raw data looking for important signals?"
A related question is: what kind of problem is distributed computing good at solving? The first pass of SETI@home data reduction is, obviously, great for distributed computing. Each workunit is a tiny chunk of sky/frequency that needs to be whittled down to its most important features - to do so requires no knowledge of any other sky/frequency chunks, so each computer can work separately and efficiently. However, to find persistent signals, you need to have access to the *entire* database. Think of it as a game of concentration. You need the whole deck to play. I don't think people would like us sending them workunits that are a terabyte in size.
Which leads to the question: why not divide the sky up into a finite set of "pixels" and then just send the signals that fall into each pixel to other computers to process? Well.. that's actually the problem we're working on now (hence the concurrent work with skymaps). In order to make these pixels useful (i.e. based on the size of our receiver's "beam") we end up with about 15 million pixels. I think that's the right number, and if it isn't it's close enough. Well, that means on average we'll have 66 signals per pixel. Not very many. So it would be easier/faster to do this processing ourselves rather than bundle it up, send it out for analysis, and then validate the results. In fact, once the analysis engine is revved up, there's no reason we couldn't do this in real time, which is the plan.
Okay I might have the math messed up in this last paragraph, but you get the idea. Basically, for final analysis, we don't need CPU power - we need human brain power for programming/analysis. - Matt -- BOINC/SETI@home network/web/science/development person -- "Any idiot can have a good idea. What is hard is to do it." - Jeanne-Claude |
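The per-pixel figure in the post can be checked with a few lines of arithmetic. This is only a sketch: it takes "almost a billion" signals as exactly one billion, and uses the quoted "about 15 million pixels" and "two million more every day" as round numbers.

```python
# Round figures taken from the posts above; all three are approximations.
total_signals = 1_000_000_000   # "almost a billion signals"
pixels = 15_000_000             # "about 15 million pixels"
new_per_day = 2_000_000         # "two million more every day"

signals_per_pixel = total_signals / pixels
daily_growth_per_pixel = new_per_day / pixels

print(f"{signals_per_pixel:.1f} signals per pixel on average")
print(f"{daily_growth_per_pixel:.2f} new signals per pixel per day")
```

With those inputs the average comes out just under 67 signals per pixel, so the "66 signals per pixel" in the post holds up. A few dozen records per pixel is a tiny unit of work, which fits the argument that packaging and validating it as workunits would cost more than processing it locally.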

John Clark Joined: 29 Sep 99 Posts: 16515 Credit: 4,418,829 RAC: 0

|

Matt, many thanks for the background, from Classic to the present. More importantly, where the project is planning to go. This shows SETI to be a real project, with its many twists and turns. But frustrated by funding! Keep up the good work, and thanks for the insights! It's good to be back amongst friends and colleagues

|

|

Wander Saito Joined: 7 Jul 03 Posts: 555 Credit: 2,136,061 RAC: 0

|

Hi Matt, This is a very good explanation indeed. I don't think I was even aware of the whole process involved in analyzing all this data we've been crunching all this time. Maybe a revised version of your post, with some visual aids, could go a long way toward explaining what SAH really does and ultimately reducing the so-called frustration level. Regards, Wander |

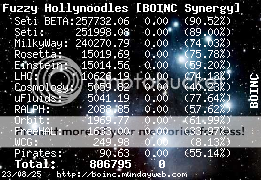

Fuzzy Hollynoodles Joined: 3 Apr 99 Posts: 9659 Credit: 251,998 RAC: 0 |

Thank you Matt for explaining this to us. I hope your posts make it more evident to people about the importance of donating money and hardware to the project. "I'm trying to maintain a shred of dignity in this world." - Me

|

Orgil Joined: 3 Aug 05 Posts: 979 Credit: 103,527 RAC: 0

|

What I suppose is that we have likely gotten into a 10, 50, or 5000 year long project, or even longer. There is another fact most people always ignore: why, when the Russian and American presidents first signed a mutual agreement, besides stopping the cold war, did they declare something very weird about an extraterrestrial invasion, that they would mutually cooperate against it? That is very weird. They were Reagan and Gorbachev. Can somebody show us that agreement document? I think I am sure of the meaning, but I never fully read the original agreement paragraph. This might be a joke or a fact, I am not sure, but it is worth considering. :) Sorry for interrupting your gamma ultra rays talk, but mine is still in the direction of the subject. Mandtugai! |

ShvrDavid Joined: 23 Apr 02 Posts: 30 Credit: 264,420 RAC: 0

|

Hmm... Your description of the servers leaves me with more questions than answers... I wouldn't look at the signals as simple ones... If you do, you will probably miss the obvious... I will try to explain what I mean... Any program that has to crunch a database can normally only find what it is programmed to look for... Programmers such as John Koza took a different approach to programming, in the fact that the programs can rewrite themselves... What I am referring to is called "Genetic Programming"... It is normally used to refine an idea by creating "offspring" from something, then looking to see if there was an improvement in the offspring, or if it is worse... This can be applied to looking for the unknown in a data set as well... Not only are you looking for repeat signals, but you are also looking for signals that are different from the noise around them... Think of them as not of natural origin... When you write the program to crunch the database, you might want to keep that in mind... |

Diego -=Mav3rik=- Joined: 1 Jun 99 Posts: 333 Credit: 3,587,148 RAC: 0 |

Many many years from now, the man in charge of the SETI project will address the crowd during the celebration after the first extraterrestrial signal has been found, confirmed in its source and planet of origin, and answered. At the end of his speech, this man will say something like: "...and let's not forget about the people that stood by the project during the first stormy years, and overcame the frustration and the incertitude. We wouldn't be here today if it wasn't for them." I know it probably won't happen in my lifetime. I'm just happy to continue to donate one more grain of sand to help build the foundation of what's to come. Hey Matt, it's cool being the frustrated stubborn pioneer. ;) For those about to crunch, I salute you. /Mav We have lingered long enough on the shores of the cosmic ocean. We are ready at last to set sail for the stars. (Carl Sagan) |

enzed Joined: 27 Mar 05 Posts: 347 Credit: 1,681,694 RAC: 0

|

Hmm... Hello ShvrDavid: You mirror a few thoughts that have been bouncing around in my head for a little while. A program does what it is told to; to work any differently requires a change in mindset, firstly by the programmer and then possibly also the hardware {depending on how radical you want to go}. The ebb and flow of long-duration cyclic anomalies is certainly possible for this type of project.... if you have a signal that is actually too weak to break through the background "noise" and be identified as a standout spike, then looking for another form of interaction pattern often helps. e.g. if a signal is too weak to be detected directly it may still be strong enough to cause swelling and rolling in the background disturbance patterns... if you follow my drift... sort of a cross pattern of smaller waves on the sea top carried along with the normal waves.. so to speak. gn's - genetic apps tend to be more of an adaptation and survival-of-the-fittest mode program.. although i certainly am not ruling them out. nn's - neural networks can be trained to look directly for the types of additional noise riding within a noise signal. sort of like thinking about amplified noise on a noisy background, noise interfering with noise. it's more a pattern recognition problem than a straight high amplitude spike detection. sort of tied in with some stuff here. and Diego... you are right... time is sometimes not on our side... but then you never know...! cheers |

William Rothamel Joined: 25 Oct 06 Posts: 3756 Credit: 1,999,735 RAC: 4

|

The signal processing is well known by now and has been for decades -- it is not realistic to think that a heuristic can spring from a program to find true data amongst the noise. The data processing or "crunching" is to look for signals that have a power spectrum and distribution that are different from random noise, which has a well defined spectrum. Purposive signals would stand out assuming that they have sufficient signal power so that correlation techniques could lift them out of the noise. The Doppler chirp processing is also essential to account for motion in the signal source. A more clever use of processing might be to look at Pi times the Lyman line and then look for information in the signal such as a counting of the prime numbers and then a dictionary decoded through video pictures on some scanning scheme. These are all long shots but then we need to get better at looking. |
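To make the "power spectrum different from random noise" point concrete, here is a minimal sketch, not the SETI@home algorithm. It assumes white Gaussian noise, a single steady tone with no Doppler drift, and a hypothetical detection rule of "ten times the mean bin power". It uses a naive DFT from the standard library so it runs anywhere; a real pipeline would use an FFT and would also search over chirp rates.

```python
import cmath
import math
import random


def power_spectrum(samples):
    """Naive O(N^2) discrete Fourier transform; returns |X[k]|^2 for k < N/2."""
    n = len(samples)
    spectrum = []
    for k in range(n // 2):
        acc = sum(x * cmath.exp(-2j * math.pi * k * t / n)
                  for t, x in enumerate(samples))
        spectrum.append(abs(acc) ** 2)
    return spectrum


def find_spikes(spectrum, threshold=10.0):
    """Return the bins whose power exceeds `threshold` times the mean power.

    The threshold value is an illustrative assumption, not a project setting.
    """
    mean_power = sum(spectrum) / len(spectrum)
    return [k for k, p in enumerate(spectrum) if p > threshold * mean_power]


random.seed(1)
n, tone_bin = 256, 40
# Unit-variance noise plus a tone whose power (0.5) is below the noise power.
samples = [random.gauss(0.0, 1.0) + math.cos(2 * math.pi * tone_bin * t / n)
           for t in range(n)]
spikes = find_spikes(power_spectrum(samples))
print("bins above threshold:", spikes)
```

The tone is invisible sample by sample, but the transform concentrates its energy into one bin while the noise stays spread across all of them, which is what lets a sufficiently strong narrowband signal stand out.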

enzed Joined: 27 Mar 05 Posts: 347 Credit: 1,681,694 RAC: 0

|

"The signal processing is well known by now and has been for decades -- it is not realistic to think that a heuristic can spring from a program to find true data amongst the noise. The data processing or "crunching" is to look for signals that have a power spectrum and distribution that are different from random noise which has a well defined spectrum. Purposive signals would stand out assuming that they have sufficient signal power so that correlation techniques could lift them out of the noise."
Hello William: Yes, the processing surely is working hard on identifying a signal that is itself powerful enough to be recognised as a signal. I am merely musing on the possibilities of signals that are not powerful enough to be seen as such by any straight detection scheme/program and at an acceptable power level... and I'm not criticising the project in the least, or else I would not be here. Think for a second on the problem a few years ago of detecting submarines... if you have enough microphones in place you can hear their props beating the water... and then electro-hydrodynamic flow changed the rules.. no props, just water jets. If they are silent can you detect them... yes... by looking for disturbance patterns in the waves, or impulse/pressure waves with much longer wavelengths than the original, generated by their movement. You're correct in saying that there will not be "true data" amongst the noise; it's far far too small a signal to extract anything meaningful. I am musing on the interference pattern that may be present at long range: an anomalous oscillation of normal background noise may/may-not indicate the presence of a relatively sub-harmonic signal being scattered and absorbed by the gas clouds etc. |

ML1 Joined: 25 Nov 01 Posts: 21183 Credit: 7,508,002 RAC: 20

|

"Yes, the processing surely is working hard on identifying a signal that is itself powerful enough to be recognised as a signal. I am merely musing on the possibilities of signals that are not powerful enough to be seen as such by any straight detection scheme/program and at an acceptable power level..."
s@h already state that we are processing the data to look for signals that purposefully are intended to be found. The present search scheme is based on that assumption and looks for whatever is most clearly "artificial" that stands out against the natural background of the cosmos. Unfortunately, we're a very long way away from having the capability to start doing anything more advanced such as "spread spectrum" decoding or sniffing for even rudimentary modulated signals. We're looking for beacons only, and that's hard enough! If you're actually talking about "sensitivity", then s@h are already doing clever tricks to integrate over various time periods to pull any "non-randomness" out of the noise. The best trade-off has been made in s@h-enhanced to boost the sensitivity versus time to crunch through the data. Slightly more sensitivity could be gained but at the expense of far greater processing time that just gives ever diminishing returns. The new ALFA data is the way to go with (x6? forgot the number) sensitivity in the first place. Keep searchin', Martin See new freedom: Mageia Linux Take a look for yourself: Linux Format The Future is what We all make IT (GPLv3) |
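The "ever diminishing returns" of longer integration can be illustrated with a small simulation. This is a sketch under stated assumptions, not a description of the s@h-enhanced code: noise-only power in one spectral bin is modelled as an exponential random variable with mean 1 (which is what Gaussian noise gives), and "integration" is modelled as a plain average of `m` such samples. The function names are hypothetical.

```python
import random
import statistics


def averaged_noise_power(m, rng):
    """Mean of m independent noise-only power samples (exponential, mean 1)."""
    return sum(rng.expovariate(1.0) for _ in range(m)) / m


def scatter(m, trials=4000, seed=0):
    """Standard deviation of the averaged noise power across many trials."""
    rng = random.Random(seed)
    return statistics.pstdev([averaged_noise_power(m, rng)
                              for _ in range(trials)])


# Averaging 16 spectra should shrink the noise scatter by roughly sqrt(16) = 4.
ratio = scatter(1) / scatter(16)
print(f"scatter reduced by a factor of about {ratio:.1f}")
```

Because the scatter only falls with the square root of the number of averaged spectra, each further doubling of sensitivity costs about four times the data and processing time. That is one way to read the trade-off described in the post.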

enzed Joined: 27 Mar 05 Posts: 347 Credit: 1,681,694 RAC: 0

|

"Yes, the processing surely is working hard on identifying a signal that is itself powerful enough to be recognised as a signal. I am merely musing on the possibilities of signals that are not powerful enough to be seen as such by any straight detection scheme/program and at an acceptable power level..."
Good morning Martin. Just to clear the air, I am very happy with what is being done here at s@h; in these days of declining interest in science it is hard to get anything of real interest done. I was just musing on the possibilities that exist. You are correct in that the best track to take is to look for an obvious signal; even that process is hard enough. I suppose what set me off was a few other comments on the boards here and the inter-relationship they had to some processes that I am currently looking at. I think both of us suffer from a common problem of either a lack of hardware, or the time available to us exclusively to use it, to make some serious headway. Now there's a thought!!!... excuse my obvious ignorance of your operational status, but you folk are linked into an educational institute of some sort??.. aren't you? ... if so, with some string pulling you may be able to effectively do what I do. Each "holiday" or semester break or whatever you folk call it, you "may" be able to use all the unused/spare computers and bind them into a single parallel processing cluster. There are various bootable "cluster" cdroms available {one called BCCD comes to mind} that don't touch/use the hard drive of the box you boot them on at all; they just allow you to grab its cpu and ram and networking capabilities. You can control the cluster from a master machine, then just reboot the pc when finished and it runs as normal. The cdrom does everything for you: networking, dhcp, dynamic cluster joining, automatic job distribution, load balancing... gaining a few hundred cpus makes a lot of difference... |

|

1mp0£173 Joined: 3 Apr 99 Posts: 8423 Credit: 356,897 RAC: 0

|

According to BOINCstats.com, there are 1,342,994 hosts currently crunching SETI. So, adding "a few hundred" would be something like a 0.05% boost (5 hundredths of one percent) -- assuming that they're "average" machines, whatever that means. |

|

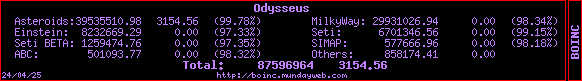

Odysseus Joined: 26 Jul 99 Posts: 1808 Credit: 6,701,347 RAC: 6

|

"According to BOINCstats.com, there are 1,342,994 hosts currently crunching SETI."
No; that’s the number of hosts that have ever been attached to the project. At the moment their figure for currently active hosts (those that have been granted credit in the last month) is 320,062. Likewise, of 605,982 total participants only 180,955 are considered active. |
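The size of the boost depends a lot on which host count goes in the denominator. The sketch below reruns the estimate with both figures quoted in the thread. The values tried for "a few hundred" are assumptions, and like the original estimate it treats every machine as an "average" host.

```python
def percent_boost(extra_hosts, existing_hosts):
    """Percentage increase in host count from adding `extra_hosts` machines."""
    return 100.0 * extra_hosts / existing_hosts


ever_attached = 1_342_994   # hosts ever attached, per the thread
active = 320_062            # hosts granted credit in the last month

for extra in (300, 700):    # hypothetical readings of "a few hundred"
    print(f"{extra} hosts: "
          f"{percent_boost(extra, ever_attached):.3f}% of all hosts, "
          f"{percent_boost(extra, active):.3f}% of active hosts")
```

Against the active-host figure the same few hundred machines are a boost roughly four times larger, though still well under one percent.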

enzed Joined: 27 Mar 05 Posts: 347 Credit: 1,681,694 RAC: 0

|

Hello folks. A possible cross connection of thoughts here; sorry to those who didn't catch the drift of the discussion.. so.. I was NOT referring to adding a few hundred computers to the S@H project as such. What I meant was if anyone wanted to do some specialised work as per ML's text, and do a search with different parameter sets:
- Aim: To analyse longer term data patterns, or other patterns.. {not just go over the same ground again}..
- Requirement: They have access to the S@H database "or had it on DVDs or whatever".
- Hardware: They "could" have a high speed, high throughput, high performance system to do the analysis on, by utilising a university's or whoever's computers over the holidays {while they are "not used"}, and joining them into a "processing cluster" that effectively makes them one single parallel processing system.
What I was simply musing on was the possibility of looking at completely different parameters in short burst runs on a high performance system... just to have a look and see what pops out... so you see it's a different beast from the one presently operational. s@h can't do it at the moment because of standard issues (e.g. a system that could do it: you need high throughput to do this stuff; expense: you can't buy this sort of processing power in one box anyway... unless you can afford a supercomputer... and who can?). So the next best thing is creating a supercomputer level of processing by using mass parallel distribution. There was no slight intended to S@H, who do a wonderful job of looking for signals. Now tell me, you guys and girls at seti, you scientists and technicians... if you had the chance to get your hands on a cluster, even for short bursts... would you do it? |

Orgil Joined: 3 Aug 05 Posts: 979 Credit: 103,527 RAC: 0

|

A host does not always mean a CPU in s@h. That host count of over a million is actually some several hundred thousand CPUs. According to my observation, maybe all SETI statistics sites show any single hyperthreaded or dual core CPU as 2 hosts. Where can we actually see stats for active working SETI CPUs? Mandtugai! |

Richard Haselgrove Joined: 4 Jul 99 Posts: 14679 Credit: 200,643,578 RAC: 874

|

|

©2024 University of California

SETI@home and Astropulse are funded by grants from the National Science Foundation, NASA, and donations from SETI@home volunteers. AstroPulse is funded in part by the NSF through grant AST-0307956.